Description:

This project is based on the article Making Worlds by Steven Wittens, which I recommend reading first if you’re interested in planet rendering. The two main differences in this approach are that it is data-based rather than procedural, and that it uses tessellation instead of a quadtree for LOD.

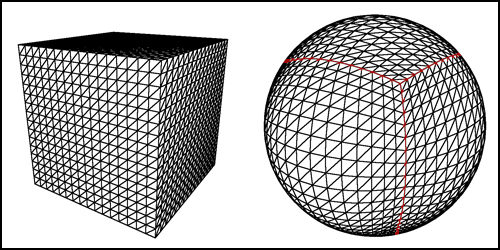

This image shows how normalizing the points of a cube turns it into a sphere.
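The idea in the image can be sketched in a few lines. This is a minimal illustration, not the project's code: the function names `cube_to_sphere` and `face_grid`, the grid size and the choice of the +Z face are all assumptions made for the example.

```python
import math

def cube_to_sphere(x, y, z, radius=1.0):
    """Project a point on the cube surface onto a sphere by normalizing it."""
    length = math.sqrt(x * x + y * y + z * z)
    if length == 0.0:
        raise ValueError("the cube centre has no direction")
    scale = radius / length
    return (x * scale, y * scale, z * scale)

def face_grid(n):
    """Yield an (n + 1) x (n + 1) grid of points on the +Z face of the cube [-1, 1]^3."""
    for j in range(n + 1):
        for i in range(n + 1):
            u = -1.0 + 2.0 * i / n
            v = -1.0 + 2.0 * j / n
            yield (u, v, 1.0)

# Every grid point of the face ends up at distance 1 from the origin.
sphere_points = [cube_to_sphere(*p) for p in face_grid(4)]
```

Repeating this for all six faces gives a full sphere whose patches are still addressed like flat, square grids, which is what makes a cubic heightmap convenient.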

For the tessellation, I simply adapted Florian Boesch’s OpenGL 4 Tessellation to work with a cubic heightmap. It worked pretty much out of the box and allowed the culling to be performed on the GPU. The heightmap is generated in World Machine using a 2:1 width-to-height ratio. I then open it in Photoshop to apply some panoramic transformations. The whole process is described here. At first I was using HDRShop 1.0.3, just like in the tutorial, but I was only able to output the result as an RGB32 image. This loss of precision resulted in some rather nasty stepping artifacts when the heightmap was mapped onto the terrain. I then moved to Flexify 2 and was able to output a proper 16-bit greyscale image.
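To get a feel for why the bit depth matters, here is a rough calculation. It assumes that RGB32 stores 8 bits per channel, and the 8000 m height range is a made-up figure for illustration only. The helper names are hypothetical too.

```python
def quantization_step(height_range_m, bits):
    """Smallest height difference that `bits` bits per sample can represent."""
    levels = 2 ** bits - 1
    return height_range_m / levels

def quantize(height_m, height_range_m, bits):
    """Round a height to the nearest value representable with `bits` bits."""
    levels = 2 ** bits - 1
    code = round(height_m / height_range_m * levels)
    return code * height_range_m / levels

HEIGHT_RANGE_M = 8000.0  # hypothetical range from lowest to highest point

step_8 = quantization_step(HEIGHT_RANGE_M, 8)    # 8 bits per channel
step_16 = quantization_step(HEIGHT_RANGE_M, 16)  # 16-bit greyscale

print(f"8-bit step:  {step_8:.2f} m")
print(f"16-bit step: {step_16:.4f} m")
```

With these numbers an 8-bit channel can only move the terrain in jumps of roughly 31 m, while 16 bits brings that down to about 12 cm. On a smooth slope the 8-bit version shows up as terraces, which matches the stepping described above.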

This image shows the final result before being split into tiles.
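Going from the 2:1 panorama to cube faces is done by Photoshop and Flexify 2 in this project. The underlying mapping can be sketched as follows. This only illustrates the general technique, not what those tools do internally. The face orientations, the y-up axis convention and the function names are assumptions chosen for the example.

```python
import math

def face_texel_to_direction(face, s, t):
    """Map (s, t) in [-1, 1] on a cube face to an unnormalized direction.

    The orientation of each face is a convention picked for this sketch.
    """
    table = {
        "+x": (1.0, t, -s),
        "-x": (-1.0, t, s),
        "+y": (s, 1.0, -t),
        "-y": (s, -1.0, t),
        "+z": (s, t, 1.0),
        "-z": (-s, t, -1.0),
    }
    return table[face]

def direction_to_equirect(x, y, z):
    """Map a direction to (u, v) in [0, 1] on a 2:1 latitude/longitude image."""
    length = math.sqrt(x * x + y * y + z * z)
    lon = math.atan2(x, z)       # -pi .. pi around the vertical axis
    lat = math.asin(y / length)  # -pi/2 .. pi/2, with +y as "up"
    u = lon / (2.0 * math.pi) + 0.5
    v = 0.5 - lat / math.pi
    return (u, v)

def equirect_pixel(face, s, t, width, height):
    """Find the panorama pixel that a cube face texel should sample."""
    u, v = direction_to_equirect(*face_texel_to_direction(face, s, t))
    px = min(int(u * width), width - 1)
    py = min(int(v * height), height - 1)
    return (px, py)

# The centre of the +z face samples the centre of a 4096 x 2048 panorama.
centre = equirect_pixel("+z", 0.0, 0.0, 4096, 2048)
```

A real converter would filter between neighbouring pixels instead of picking the nearest one, and would need to keep the full 16-bit values throughout to avoid the stepping mentioned earlier.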

Known Bugs:

- Visible seams at the edges of the tiles. I’ll definitely fix this at some point.

- Patches can be culled when the camera is too close and perpendicular to the terrain. This is related to the shape of the view frustum.
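The culling bug has a common cause that may or may not be the one at play here, so treat the following as an assumption. If a patch is culled whenever each of its corners is off screen, a patch that fills the whole view gets dropped, because its corners are off screen on different sides. A more conservative test culls only when all corners are outside the same frustum plane. The sketch below works on clip-space corners with the OpenGL convention (-w to w on each axis). All names in it are hypothetical.

```python
def outside_planes(clip):
    """Return the frustum planes that a clip-space point (x, y, z, w) is outside of."""
    x, y, z, w = clip
    planes = set()
    if x < -w:
        planes.add("left")
    if x > w:
        planes.add("right")
    if y < -w:
        planes.add("bottom")
    if y > w:
        planes.add("top")
    if z < -w:
        planes.add("near")
    if z > w:
        planes.add("far")
    return planes

def cull_naive(corners):
    """Cull when every corner is off screen, no matter on which side."""
    return all(outside_planes(c) for c in corners)

def cull_conservative(corners):
    """Cull only when all corners are outside one and the same plane."""
    shared = set.intersection(*(outside_planes(c) for c in corners))
    return bool(shared)

# A patch seen from very close: it covers the screen, yet no corner is visible.
close_patch = [
    (-3.0, -3.0, 0.5, 1.0),
    (3.0, -3.0, 0.5, 1.0),
    (3.0, 3.0, 0.5, 1.0),
    (-3.0, 3.0, 0.5, 1.0),
]
print(cull_naive(close_patch))         # True: wrongly culled
print(cull_conservative(close_patch))  # False: kept
```

The conservative test can keep a few patches that are in fact invisible, but it never removes one that overlaps the view in this way.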

Tools Used:

Libs Used:

Source:

Builds:

Media: