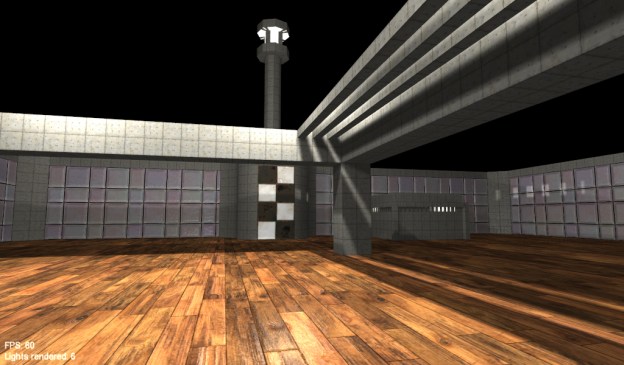

The demo implements a few new techniques, but there’s still a lot more to do. Right now there’s:

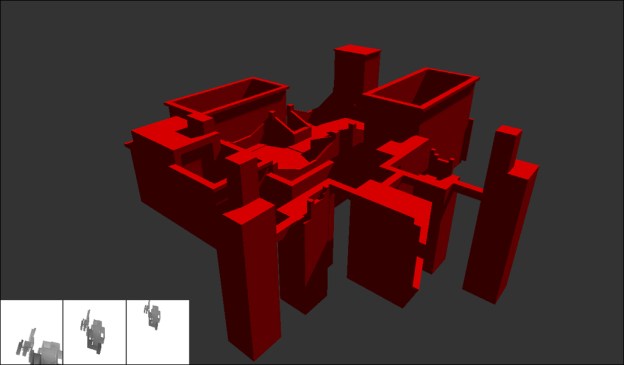

- Voxel Volume Generation

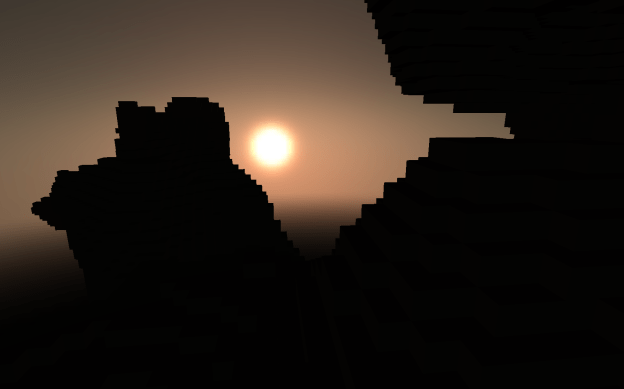

- Atmospheric Light Scattering / Irradiance Map

And still to implement:

- Motion Blur / Adaptive HDR

- Cascaded Shadow Mapping

- Maybe some ambient occlusion (AO)

Builds:

- REV1 (less than 2 MB)

Media: